The problem goes thus: there is a game that costs X dollars to play, which simply involves tossing a coin. You start with a pot of $2, and every time the coin comes up heads the banker doubles the pot. As soon as the coin comes up tails the game ends, and you get to walk away with the pot. The question is: what is a reasonable price X to pay to play the game?
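To make the rules concrete, here is a minimal sketch of a single game in Python. The function name `play_game` and its `flips` argument are made up for this illustration: `flips` lets you feed in a fixed sequence of tosses (True for heads), and if you leave it out a fair coin is simulated.

```python
import random

def play_game(flips=None):
    """Play one game and return the final pot in dollars.

    `flips` is a hypothetical hook for illustration: an iterator of
    booleans (True = heads). If omitted, a fair coin is simulated.
    """
    pot = 2  # the pot starts at $2
    while True:
        heads = next(flips) if flips is not None else random.random() < 0.5
        if not heads:
            return pot  # tails: the game ends and we take the pot
        pot *= 2  # heads: the banker doubles the pot

# Heads, heads, tails: the pot goes 2 -> 4 -> 8.
print(play_game(iter([True, True, False])))
```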

The paradox comes from how statistics defines a 'fair' price. Usually we calculate the average, or "expected", amount of money to be made from the game by multiplying each possible payout by its probability and adding up the results. In this game, we have a 50:50 chance of getting $2 (the first throw being a tail), then a 1/4 chance of getting $4 (a head, then a tail), then a 1/8 chance of getting $8 (heads, heads, tails) and so on. That means we can expect on average $1 from the worst-case scenario (it's $2, and happens half the time, and $2 x 1/2 = $1), another $1 from the heads-tails scenario ($4 x 1/4 = $1) and so on. This process goes on forever - it's always possible to get more heads - so the average amount we expect to win in this game is $1 + $1 + $1 + .... = infinite money, and that's how much we should apparently spend to play the game.
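The sum above can be checked with a few lines of Python. This is only a sketch: it assumes we cut the game off after a maximum number of tosses (the hypothetical `max_tosses` parameter), which the real game never does. Each extra toss we allow adds exactly $1 to the expected value, so the total grows without limit.

```python
from fractions import Fraction

def truncated_expected_value(max_tosses):
    """Expected winnings if we only count games ending within max_tosses tosses."""
    total = Fraction(0)
    for k in range(1, max_tosses + 1):
        payout = 2 ** k                    # the game ends on toss k with a pot of $2^k
        probability = Fraction(1, 2 ** k)  # k-1 heads followed by a tail
        total += payout * probability      # each term is exactly $1
    return total

for max_tosses in (1, 10, 100):
    print(max_tosses, truncated_expected_value(max_tosses))
```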

This obviously doesn't make sense. For a start, the coin is always going to come up tails at some point, so every game pays out a finite amount and it's physically impossible for you to make infinite money, no matter how many times you get heads. The problem is that the idea of an expected amount of money depends on the assumption that we want to know what happens in the long run, so it assumes we are playing this game infinitely many times and taking the average. But when we play infinitely many times, we suddenly have access to the end of the rainbow, where arbitrarily long runs of heads pay out arbitrarily large sums. Infinity is a mathematical construct that we never see in reality. Usually we can deal with it pretty happily without weird things happening, but this is a weird game, and it breaks our usual assumptions.

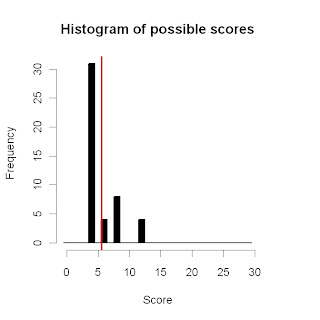

What we can do instead is see what's most likely to happen to our winnings as we keep playing. For a single game, it's pretty clear that most of the time we'll win either $2 or $4 (with a 50% and 25% chance respectively), and occasionally $8 (12.5%), but we're not likely to win much more than that. If we play two games, then our worst-case scenario is that we'll win $4 in total, with a 1/2 x 1/2 = 1/4 chance. There are two ways we can win $6 - we can win $2 then $4, or $4 then $2. Each of these options has a 1/2 x 1/4 = 1/8 chance of happening, so overall there's a 1/4 chance of winning $6 too. We can calculate the other possibilities the same way - obviously we have to stop at some point, but we can go far enough to get a decent idea. We can then keep going and see what happens when we play more and more games in a row, where getting bigger jackpots becomes more and more likely.
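The two-game calculation can be sketched in Python by combining every possible first-game payout with every possible second-game payout. The helper names `single_game` and `add_game` are invented for this example, and the game is cut off after 10 tosses (an assumption made so the table is finite; it doesn't affect the small totals shown).

```python
from collections import defaultdict
from fractions import Fraction

def single_game(max_tosses):
    """Payout -> probability for one game, ignoring runs longer than max_tosses."""
    return {2 ** k: Fraction(1, 2 ** k) for k in range(1, max_tosses + 1)}

def add_game(totals, game):
    """Combine a distribution of running totals with one more game."""
    combined = defaultdict(Fraction)
    for total, p in totals.items():
        for payout, q in game.items():
            combined[total + payout] += p * q  # independent games: multiply chances
    return dict(combined)

game = single_game(max_tosses=10)
two_games = add_game(game, game)

# The four smallest totals after two games and their probabilities.
for total in sorted(two_games)[:4]:
    print(f"${total}: {two_games[total]}")
```

Calling `add_game` repeatedly gives the distribution for three games, four games and so on.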

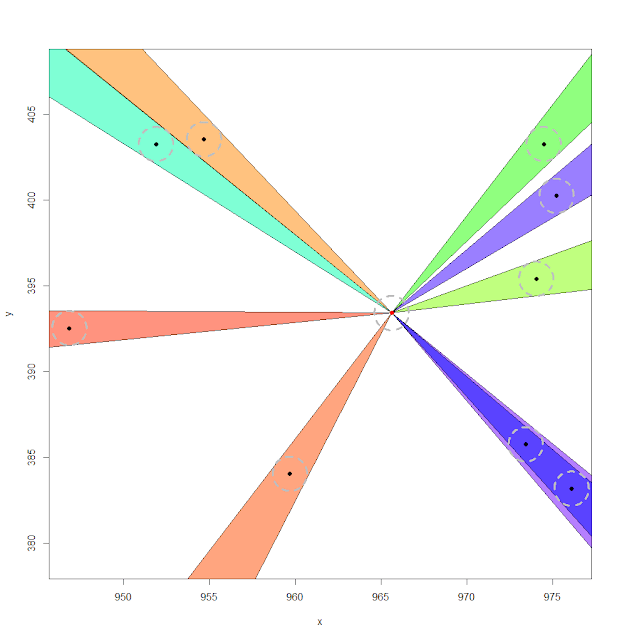

Of course, the best way to do this is with a computer to avoid all those pesky calculations. Here is a graph of the possibilities over the course of 100 games:

Lighter colours represent where a possibility is relatively likely, and dark colours where it is unlikely. You can see little waves towards the top-left of the graph - this is where after a few games there's a small but decent chance of getting a single big win which overwhelms all of the other winnings. Especially when not many games have been played, it's more likely that you'll get a single big win and a lot of small wins than multiple medium-sized wins.

The blue line represents the median of the average winnings per game, and is surrounded by red interquartile lines - the idea is that half of the time, your winnings per game after a certain number of games will be between the two red lines. For example, after 50 games, it's 50-50 whether your average winnings are above or below $8.20 (the median), and half the time your average winnings will be between $6.12 and $12.44. So if you paid only $6 a game, you're probably doing pretty well at this point!
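For anyone who wants to reproduce numbers like these, here is a rough sketch of one way to do it. It is not the code behind the graph. It builds up the distribution of total winnings one game at a time, working in units of $2, and simply throws away any total above a cap (the hypothetical `cap_units` parameter), so a quantile that lies beyond the cap comes back as `None`.

```python
def total_distribution(n_games, cap_units):
    """probs[s] = chance that total winnings equal 2*s dollars, for s <= cap_units.

    Totals above the cap are dropped, so the list may sum to less than 1.
    """
    # Single-game payouts in $2 units: 1, 2, 4, ... with chances 1/2, 1/4, 1/8, ...
    payouts = []
    k = 0
    while 2 ** k <= cap_units:
        payouts.append((2 ** k, 0.5 ** (k + 1)))
        k += 1

    probs = [0.0] * (cap_units + 1)
    probs[0] = 1.0  # before any games we have won nothing
    for _ in range(n_games):
        new = [0.0] * (cap_units + 1)
        for s, p in enumerate(probs):
            if p == 0.0:
                continue
            for units, q in payouts:
                if s + units > cap_units:
                    break  # payouts are increasing, so the rest overflow too
                new[s + units] += p * q
        probs = new
    return probs

def quantile_per_game(probs, n_games, level):
    """Smallest average winnings per game whose cumulative chance reaches `level`."""
    cumulative = 0.0
    for s, p in enumerate(probs):
        cumulative += p
        if cumulative >= level:
            return 2 * s / n_games
    return None  # the quantile lies beyond the cap

probs = total_distribution(n_games=50, cap_units=2000)
for level in (0.25, 0.5, 0.75):
    print(level, quantile_per_game(probs, 50, level))
```

The cap is the same limitation mentioned for the graph: once the interesting totals get too big to track, the upper quartile is the first line to disappear.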

The most important part of this graph is that these numbers are going up as we keep playing games, meaning that the game becomes more and more reliably profitable. Further along the graph, the computer can no longer keep track of the larger winnings (which is why the red line disappears), so we need to find another way to work out what happens with more than 100 games. Using results cited in this paper, we can actually estimate the median winnings per game as

$2.55 + log2(number of games)

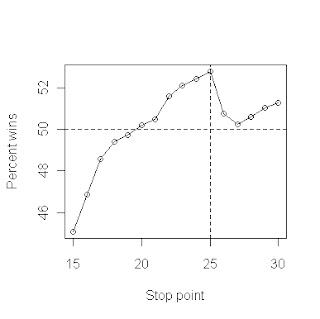

So after 100 games, $9.20 looks like a reasonable price - paying that price, half the time we'll end up ahead, the other half we won't. Note that the distribution is what statisticians call skewed - even though we only come out ahead half the time after 100 games, the "good" half is a lot better than the "bad" half is bad.
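The estimate is easy to evaluate directly. In this small sketch the function name `estimated_median_per_game` is just a label for the formula above. For 50 and 100 games it gives values that round to roughly $8.20 and $9.20.

```python
import math

def estimated_median_per_game(n_games):
    """Approximate median winnings per game: $2.55 + log2(number of games)."""
    return 2.55 + math.log2(n_games)

for n_games in (50, 100):
    print(n_games, round(estimated_median_per_game(n_games), 2))
```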

Let's say that we really want to milk this game for all it's worth, and we've found a game online that we can make our computer play for us. If we can play a million games a second, and leave our computer running for a year, that's over 30 trillion games. If we put that into our formula, we get a median win of $47.40 per game. If we paid that much per game to play, we'd expect to lose a lot of money at the start but make it back as the games wore on and we got more and more jackpots, breaking even after a year. However, if we only paid $9.20 as before, we'd expect to be doing OK by 100 games (i.e. after 100 microseconds), and by the time our program had been running for a year, we'd be looking at profits of around $1,200 trillion - 700 times Australia's GDP and enough to basically rule the world.
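The arithmetic behind those figures can be sketched as follows. It assumes a 365-day year and treats the median winnings per game as the typical result, which is a simplification. The constant and variable names are only for illustration.

```python
import math

GAMES_PER_SECOND = 1_000_000
SECONDS_PER_YEAR = 60 * 60 * 24 * 365  # assumes a 365-day year

games = GAMES_PER_SECOND * SECONDS_PER_YEAR

# Median winnings per game from the formula: $2.55 + log2(number of games).
median_per_game = 2.55 + math.log2(games)

price_per_game = 9.20  # the break-even price after 100 games
profit = (median_per_game - price_per_game) * games

print(f"games played:     {games:,}")
print(f"median per game:  ${median_per_game:.2f}")
print(f"profit at $9.20:  ${profit / 1e12:,.0f} trillion")
```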

Unfortunately, no casino will ever host this game, online or otherwise, for exactly this reason. Sooner or later, the house will always lose.